Arısoy Saraçlar, Ebru

Name Variants

Arısoy, Ebru

Email Address

saraclare@mef.edu.tr

Main Affiliation

02.05. Department of Electrical and Electronics Engineering

Status

Current Staff

Sustainable Development Goals

| Goal | Title | Research Products |
|---|---|---|
| 1 | NO POVERTY | 0 |
| 2 | ZERO HUNGER | 0 |
| 3 | GOOD HEALTH AND WELL-BEING | 0 |
| 4 | QUALITY EDUCATION | 1 |
| 5 | GENDER EQUALITY | 0 |
| 6 | CLEAN WATER AND SANITATION | 0 |
| 7 | AFFORDABLE AND CLEAN ENERGY | 0 |
| 8 | DECENT WORK AND ECONOMIC GROWTH | 0 |
| 9 | INDUSTRY, INNOVATION AND INFRASTRUCTURE | 0 |
| 10 | REDUCED INEQUALITIES | 0 |
| 11 | SUSTAINABLE CITIES AND COMMUNITIES | 0 |
| 12 | RESPONSIBLE CONSUMPTION AND PRODUCTION | 0 |
| 13 | CLIMATE ACTION | 0 |
| 14 | LIFE BELOW WATER | 0 |
| 15 | LIFE ON LAND | 0 |
| 16 | PEACE, JUSTICE AND STRONG INSTITUTIONS | 0 |
| 17 | PARTNERSHIPS FOR THE GOALS | 0 |

Documents

42

Citations

1382

h-index

14

Documents

30

Citations

642

Scholarly Output

22

Articles

0

Views / Downloads

3770/2540

Supervised MSc Theses

0

Supervised PhD Theses

0

WoS Citation Count

85

Scopus Citation Count

47

Patents

0

Projects

3

WoS Citations per Publication

3.86

Scopus Citations per Publication

2.14

Open Access Source

5

Supervised Theses

0
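
The two per-publication figures listed above appear to be the citation counts divided by the Scholarly Output count of 22. The profile does not state this definition, so the sketch below treats it as an assumption; the function name `citations_per_publication` is hypothetical and only serves the check.

```python
def citations_per_publication(citations: int, publications: int) -> float:
    """Average citations per publication, rounded to two decimals.

    Assumption: the profile divides a citation count by the number of
    scholarly outputs. The page itself does not document the formula.
    """
    if publications <= 0:
        raise ValueError("publications must be positive")
    return round(citations / publications, 2)


# Values copied from the profile: 22 scholarly outputs,
# 85 WoS citations, 47 Scopus citations.
wos_ratio = citations_per_publication(85, 22)
scopus_ratio = citations_per_publication(47, 22)
print(wos_ratio, scopus_ratio)
```

Under that assumption the results match the listed values of 3.86 (WoS) and 2.14 (Scopus).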

| Journal | Count |
|---|---|
| 2020 28th Signal Processing and Communications Applications Conference (SIU) | 3 |
| Turkish Natural Language Processing | 2 |
| 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation, LREC-COLING 2024 - Main Conference Proceedings -- Joint 30th International Conference on Computational Linguistics and 14th International Conference on Language Resources and Evaluation, LREC-COLING 2024 -- 20 May 2024 through 25 May 2024 -- Hybrid, Torino -- 199620 | 1 |
| 26th IEEE Signal Processing and Communications Applications Conference, SIU 2018 -- 26th IEEE Signal Processing and Communications Applications Conference, SIU 2018 -- 2 May 2018 through 5 May 2018 -- Izmir -- 137780 | 1 |
| Conference: 16th Annual Conference of the International-Speech-Communication-Association (INTERSPEECH 2015) Location: Dresden, GERMANY Date: SEP 06-10, 2015 | 1 |

Scopus Quartile Distribution

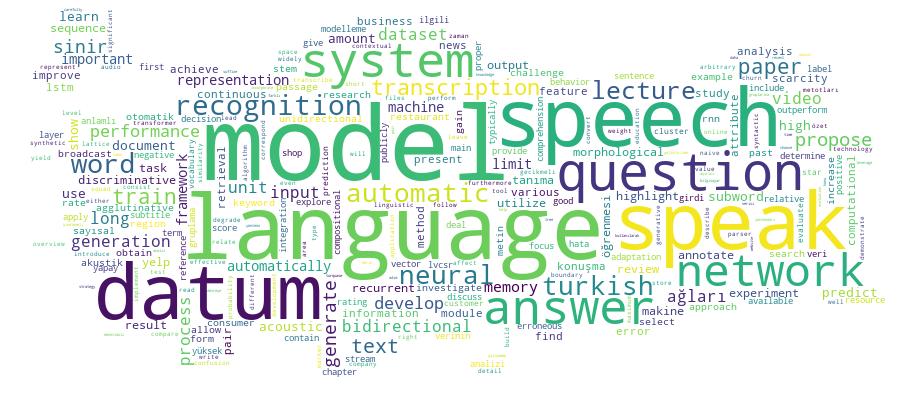

22 results

Scholarly Output Search Results

Now showing 1 - 10 of 22

Conference Object | Citation - WoS: 60

Bidirectional Recurrent Neural Network Language Models for Automatic Speech Recognition (IEEE, 2015)

Chen, Stanley; Sethy, Abhinav; Ramabhadran, Bhuvana; Arısoy, Ebru

Recurrent neural network language models have enjoyed great success in speech recognition, partially due to their ability to model longer-distance context than word n-gram models. In recurrent neural networks (RNNs), contextual information from past inputs is modeled with the help of recurrent connections at the hidden layer, while Long Short-Term Memory (LSTM) neural networks are RNNs that contain units that can store values for arbitrary amounts of time. While conventional unidirectional networks predict outputs from only past inputs, one can build bidirectional networks that also condition on future inputs. In this paper, we propose applying bidirectional RNNs and LSTM neural networks to language modeling for speech recognition. We discuss issues that arise when utilizing bidirectional models for speech, and compare unidirectional and bidirectional models on an English Broadcast News transcription task. We find that bidirectional RNNs significantly outperform unidirectional RNNs, but bidirectional LSTMs do not provide any further gain over their unidirectional counterparts.

Conference Object | Citation - Scopus: 2

Dealing With Data Scarcity in Spoken Question Answering (European Language Resources Association (ELRA), 2024)

Arısoy, Ebru; Özgür, Arzucan; Ünlü Menevşe, Merve; Manav, Yusufcan; Menevse, Merve Unlu; Manavi, Yusufcan

This paper focuses on dealing with data scarcity in spoken question answering (QA) using automatic question-answer generation and a carefully selected fine-tuning strategy that leverages limited annotated data (paragraphs and question-answer pairs). Spoken QA is a challenging task because it relies on spoken documents, i.e., erroneous automatic speech recognition (ASR) transcriptions, and because spoken QA data is scarce. We propose a framework for utilizing limited annotated data effectively to improve spoken QA performance. To deal with data scarcity, we train a question-answer generation model with annotated data and then produce large amounts of question-answer pairs from unannotated data (paragraphs). Our experiments demonstrate that incorporating limited annotated data and the automatically generated data through a carefully selected fine-tuning strategy leads to a 5.5% relative F1 gain over the model trained only with annotated data. Moreover, the proposed framework is also effective under high ASR error rates. © 2024 ELRA Language Resource Association: CC BY-NC 4.0.

Conference Object | Citation - WoS: 1 | Citation - Scopus: 2

Improving the Usage of Subword-Based Units for Turkish Speech Recognition (IEEE, 2020)

Çetinkaya, Gözde; Saraçlar, Murat; Arısoy, Ebru

Subword units are often utilized to achieve better performance in speech recognition because of the high number of observed words in agglutinative languages. In this study, the proper use of subword units in recognition is explored by reconsidering details such as silence modeling and position-dependent phones. A modified lexicon is implemented with finite-state transducers to represent the subword units correctly. We also experiment with different types of word boundary markers and achieve the best performance by adding a marker to both the left and right side of a subword unit. In our experiments on a Turkish broadcast news dataset, the subword models outperform word-based models and naive subword implementations. Results show that using proper subword units leads to a relative word error rate (WER) reduction of 2.4% compared with the word-level automatic speech recognition (ASR) system for Turkish.

Conference Object | Citation - WoS: 1 | Citation - Scopus: 1

Domain Adaptation Approaches for Acoustic Modeling (IEEE, 2020)

Arısoy, Ebru; Fakhan, Enver

In recent years, with the development of neural network based models, ASR systems have achieved a tremendous performance increase. However, this performance increase mostly depends on the amount of training data and the computational power. In a low-resource data scenario, publicly available datasets can be utilized to overcome data scarcity. Furthermore, using a pre-trained model and adapting it to the in-domain data can help with computational constraints. In this paper, we leverage two different publicly available datasets and investigate various acoustic model adaptation approaches. We show that a 4% word error rate can be achieved using very limited in-domain data.

Conference Object | Citation - WoS: 2 | Citation - Scopus: 2

Turkish Broadcast News Transcription Revisited (IEEE, 2018)

Saraçlar, Murat; Arısoy, Ebru

In this study, the automatic speech recognition based transcription system for Turkish broadcast news that was implemented about ten years ago is rebuilt with current methods, and its performance is measured on the same data. In recent years, deep learning methods based on artificial neural networks have brought a marked improvement in speech recognition error rates and are now widely used. In the system developed in this paper, neural networks are used for the acoustic and language models, which are the main components of the speech recognition system. For acoustic modeling, deep neural networks are optimized with both cross-entropy and discriminative sequence objective functions. In addition, to model long-term dependencies, time-delay neural networks are used, which perform similarly to recurrent neural networks but can be trained faster. The lowest error rates are then reached by optimizing these networks with discriminative training. For the language model, recurrent neural networks are used. With these new neural network based models, word error rates are observed to be halved and to fall below 10%.

Conference Object

Highlighting of Lecture Video Closed Captions (IEEE, 2020)

Yıldırım, Göktuğ; Öztufan, Huseyin Efe; Arısoy, Ebru; Yildirm, Goktug

The main purpose of this study is to automatically highlight important regions of lecture video subtitles. Even though watching videos is an effective way of learning, the main disadvantage of video-based education is the limited interaction between the learner and the video. With the developed system, important regions that are automatically determined in lecture subtitles are highlighted with the aim of increasing the learner's attention to these regions. In this paper, the lecture videos are first converted into text using an automatic speech recognition system. Then continuous space representations for sentences or word sequences in the transcriptions are generated using Bidirectional Encoder Representations from Transformers (BERT). Important regions of the subtitles are selected using a clustering method based on the similarity of these representations. The developed system is applied to the lecture videos, and it is found that using word sequence representations to determine the important regions of subtitles gives higher performance than using sentence representations. This result is encouraging for automatic highlighting of speech recognition outputs, where sentence boundaries are not defined explicitly.

Master's Graduation Project

E-Commerce Customer Shurn Prediction Based Machine Learning Algortihms (MEF Üniversitesi, Fen Bilimleri Enstitüsü, 2018)

Eser, Ahmet Yetkin; Arısoy Saraçlar, Ebru

With the development and popularization of the digital world, human behavior has changed remarkably. Many sectors have been affected by this change, and one of the most affected is the retail sector. People have left their regular shopping habits and started shopping on e-commerce sites. Thanks to the increasing variety and volume of collected data and the speed of new machines, companies can use sophisticated algorithms efficiently on their data. In this paper, we discuss how companies can predict potentially churned customers with machine learning methods.

Conference Object

Turkish Broadcast News Transcription Revisited (Institute of Electrical and Electronics Engineers Inc., 2018)

Arisoy, Ebru; Saraclar, Murat

Conference Object | Citation - WoS: 1

Evaluating Large Language Models in Data Generation for Low-Resource Scenarios: A Case Study on Question Answering (International Speech Communication Association, 2025)

Arisoy, Ebru; Menevse, Merve Unlu; Manav, Yusufcan; Ozgur, Arzucan

Large Language Models (LLMs) are powerful tools for generating synthetic data, offering a promising solution to data scarcity in low-resource scenarios. This study evaluates the effectiveness of LLMs in generating question-answer pairs to enhance the performance of question answering (QA) models trained with limited annotated data. While synthetic data generation has been widely explored for text-based QA, its impact on spoken QA remains underexplored. We specifically investigate the role of LLM-generated data in improving spoken QA models, showing performance gains across both text-based and spoken QA tasks. Experimental results on subsets of the SQuAD, Spoken SQuAD, and a Turkish spoken QA dataset demonstrate significant relative F1 score improvements of 7.8%, 7.0%, and 2.7%, respectively, over models trained solely on restricted human-annotated data. Furthermore, our findings highlight the robustness of LLM-generated data in spoken QA settings, even in the presence of noise.

Conference Object | Citation - WoS: 3 | Citation - Scopus: 5

Developing an Automatic Transcription and Retrieval System for Spoken Lectures in Turkish (IEEE, 2017)

Arısoy, Ebru

With the increase of online video lectures, using speech and language processing technologies for education has become quite important. This paper presents an automatic transcription and retrieval system developed for processing spoken lectures in Turkish. The main steps in the system are automatic transcription of Turkish video lectures using a large vocabulary continuous speech recognition (LVCSR) system and finding keywords on the lattices obtained from the LVCSR system using a speech retrieval system based on keyword search. While developing this system, first a state-of-the-art LVCSR system was developed for Turkish using advanced acoustic modeling methods, then keywords were extracted automatically from word sequences in the reference transcriptions of video lectures, and a speech retrieval system was developed for searching these keywords in the lattice output of the LVCSR system. The spoken lecture processing system yields a 14.2% word error rate and a 0.86 maximum term weighted value on the test data.
